When you store a file, it is divided into blocks of a fixed size. In a local file system these blocks are stored on a single system, whereas in a distributed file system the blocks of a file are stored on different systems across the cluster.

The Hadoop framework has its own distributed file system, known as the Hadoop Distributed File System (HDFS), for handling huge files.

Hadoop Distributed File System

HDFS is designed to support large files, and by large we mean file sizes ranging from gigabytes to terabytes. HDFS is also designed to run on clusters of commodity hardware, with the nodes working in parallel.

Given this design, what you expect from HDFS is:

- Fault tolerance. Since low-cost (commodity) hardware is used in the cluster, the chance of a node becoming dysfunctional is high, and a block of data may also get corrupted. So detection of faults and quick, automatic recovery from them is a core architectural goal of HDFS.

- Since the blocks of a file are stored across the cluster, the aggregate bandwidth should be high.

- HDFS should be able to scale to hundreds of nodes in a single cluster.

What is HDFS block size

As already stated, a file is divided into several blocks for storage. A block is the unit of data that a file system reads or writes. In a local file system the block size is generally small; for example, in Windows the block size is typically 4 KB.

Since HDFS works on large data sets stored across the nodes of a cluster, a small block size would create problems. While reading a file, a lot of time would be spent finding the nodes where the blocks reside, connecting to those nodes, and looking for each block on the node's drive. To make the time spent on these activities negligible compared to the time spent actually reading data, the block size in HDFS is much larger.
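The trade-off can be made concrete with a little arithmetic. The Java sketch below assumes, purely for illustration, a fixed per-block overhead of 10 ms (locating the node, connecting, seeking) and a transfer rate of 100 MB/s; these are not measured Hadoop figures, and the class and method names are hypothetical.

```java
public class BlockOverhead {
    // Illustrative assumptions only, not measured Hadoop figures:
    static final double OVERHEAD_MS = 10.0;          // hypothetical cost to locate, connect to and seek to one block
    static final double TRANSFER_MB_PER_SEC = 100.0; // hypothetical sustained transfer rate

    /** Fraction of the time spent reading one block that goes to the fixed per-block overhead. */
    static double overheadFraction(double blockSizeMb) {
        double transferMs = blockSizeMb / TRANSFER_MB_PER_SEC * 1000.0;
        return OVERHEAD_MS / (OVERHEAD_MS + transferMs);
    }

    public static void main(String[] args) {
        double[] blockSizesMb = {4.0 / 1024.0, 64.0, 128.0}; // 4 KB, 64 MB, 128 MB
        for (double size : blockSizesMb) {
            System.out.printf("block size %10.4f MB -> %6.2f%% of read time is overhead%n",
                    size, overheadFraction(size) * 100.0);
        }
    }
}
```

With these assumed numbers, about 99.6% of the read time goes to overhead with a 4 KB block, versus under 1% with a 128 MB block.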

The default block size is 128 MB in Hadoop 3.x (the same as in Hadoop 2.x); it was 64 MB in Hadoop 1.x.

For example, if you have a file of size 200 MB, it will be split into two blocks of 128 MB and 72 MB respectively. These blocks will then be stored on different machines in the cluster.

Note that if a block is smaller than the configured block size, it won't occupy the whole block size on the drive. The 72 MB block takes only 72 MB of disk space, not the whole 128 MB.
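The split in the example can be sketched in Java as follows. splitIntoBlocks is a hypothetical helper that models only the arithmetic of dividing a file into fixed-size blocks; it is not part of the Hadoop API, and its disk-usage figure ignores replication and checksum metadata.

```java
import java.util.ArrayList;
import java.util.List;

public class BlockSplitter {
    static final long MB = 1024L * 1024L;

    /** Hypothetical helper: returns the sizes (in bytes) of the blocks a file is divided into. */
    static List<Long> splitIntoBlocks(long fileSize, long blockSize) {
        if (blockSize <= 0) {
            throw new IllegalArgumentException("blockSize must be positive");
        }
        List<Long> blocks = new ArrayList<>();
        long remaining = fileSize;
        while (remaining > 0) {
            long size = Math.min(blockSize, remaining); // the last block may be smaller than blockSize
            blocks.add(size);
            remaining -= size;
        }
        return blocks;
    }

    public static void main(String[] args) {
        List<Long> blocks = splitIntoBlocks(200 * MB, 128 * MB);
        for (int i = 0; i < blocks.size(); i++) {
            System.out.println("block-" + (i + 1) + ": " + blocks.get(i) / MB + " MB");
        }
        // The last block only uses as much space as it holds, so the total equals the file size.
        long onDisk = blocks.stream().mapToLong(Long::longValue).sum();
        System.out.println("disk space used by one copy of the blocks: " + onDisk / MB + " MB");
    }
}
```

Running it prints block-1: 128 MB and block-2: 72 MB, with 200 MB of disk space in total rather than 256 MB.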

Changing the HDFS block size property

If you want to change the default block size of 128 MB in Hadoop, you can edit the etc/hadoop/hdfs-site.xml file in your Hadoop installation directory.

The property you need to change is dfs.blocksize (dfs.block.size is the older, deprecated name, which Hadoop still recognizes).

```xml
<property>
  <name>dfs.blocksize</name>
  <value>134217728</value>
  <description>HDFS block size</description>
</property>
```

Note that the block size is given in bytes here: 128 MB = 128 * 1024 * 1024 = 134217728 bytes.
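The megabyte-to-byte conversion for the value element can be checked with a one-line helper. mbToBytes is a hypothetical name used only for illustration.

```java
public class BlockSizeUnits {
    static final long BYTES_PER_MB = 1024L * 1024L; // 1 MB = 2^20 bytes

    /** Hypothetical helper: converts megabytes to the byte count written in hdfs-site.xml. */
    static long mbToBytes(long mb) {
        return mb * BYTES_PER_MB;
    }

    public static void main(String[] args) {
        System.out.println(mbToBytes(64));  // 67108864  (Hadoop 1.x default)
        System.out.println(mbToBytes(128)); // 134217728 (Hadoop 2.x/3.x default)
    }
}
```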

HDFS Architecture - NameNode and DataNode

HDFS follows a master/slave architecture. An HDFS cluster has a single NameNode, a master server that manages the file system namespace and regulates access to files by clients.

In addition, there are a number of DataNodes, usually one per node in the cluster, which manage the storage attached to the nodes that they run on.

The NameNode stores the metadata about files and determines the mapping of blocks to DataNodes. File system namespace operations such as opening, closing, and renaming files and directories are executed by the NameNode.

The actual data of a file is stored on the DataNodes, which are responsible for serving read and write requests from the file system's clients.
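The division of labour between the NameNode and the DataNodes can be sketched as a toy Java model. The classes here (ToyHdfs and its nested NameNode and DataNode, plus addBlock, read and demo) are hypothetical and are not Hadoop's classes of the same name; block IDs like "blk_1" are made up. The model only shows that the NameNode keeps metadata (which blocks make up a file and where each lives) while the bytes sit on the DataNodes.

```java
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** Toy model of the NameNode/DataNode split; not Hadoop's actual classes or API. */
public class ToyHdfs {
    /** A toy DataNode stores the actual bytes of the blocks assigned to it. */
    static class DataNode {
        final String name;
        final Map<String, byte[]> blocks = new HashMap<>(); // block id -> block contents
        DataNode(String name) { this.name = name; }
    }

    /** A toy NameNode stores only metadata: a file's blocks and each block's locations. */
    static class NameNode {
        private final Map<String, List<String>> fileToBlocks = new HashMap<>();
        private final Map<String, List<DataNode>> blockLocations = new HashMap<>();

        void addBlock(String path, String blockId, List<DataNode> locations) {
            fileToBlocks.computeIfAbsent(path, p -> new ArrayList<>()).add(blockId);
            blockLocations.put(blockId, locations);
        }

        List<String> getBlocks(String path) { return fileToBlocks.getOrDefault(path, List.of()); }

        List<DataNode> getLocations(String blockId) { return blockLocations.getOrDefault(blockId, List.of()); }
    }

    /** Client read: ask the NameNode for metadata, then fetch each block's bytes from a DataNode. */
    static String read(NameNode nameNode, String path) {
        StringBuilder result = new StringBuilder();
        for (String blockId : nameNode.getBlocks(path)) {
            DataNode source = nameNode.getLocations(blockId).get(0); // simplification: first listed location
            result.append(new String(source.blocks.get(blockId), StandardCharsets.UTF_8));
        }
        return result.toString();
    }

    /** Builds a two-DataNode cluster holding one file split into two blocks, then reads it back. */
    static String demo() {
        DataNode dn1 = new DataNode("DataNode1");
        DataNode dn2 = new DataNode("DataNode2");
        dn1.blocks.put("blk_1", "Hello, ".getBytes(StandardCharsets.UTF_8));
        dn2.blocks.put("blk_2", "HDFS".getBytes(StandardCharsets.UTF_8));

        NameNode nameNode = new NameNode();
        nameNode.addBlock("/A.txt", "blk_1", List.of(dn1));
        nameNode.addBlock("/A.txt", "blk_2", List.of(dn2));
        return read(nameNode, "/A.txt");
    }

    public static void main(String[] args) {
        System.out.println(demo()); // Hello, HDFS
    }
}
```

In this model a read is two steps: a metadata lookup at the NameNode, followed by data transfer from the DataNodes.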

HDFS design features

Some of the design features of HDFS, and the scenarios for which these features make HDFS a good fit, are as follows:

1. Streaming data access: HDFS is designed for streaming data access, i.e. data is read continuously. This makes HDFS more suitable for batch processing than for interactive use. The emphasis is on high throughput of data access rather than low latency of data access. A large block size helps here, as the time spent seeking to the start of a block on the drive is negligible compared to the time spent reading the block's data.

2. Simple coherency model: HDFS is designed with the idea that files will be written once and then read many times. So a file, once stored in HDFS, can't be modified arbitrarily; you can't have random writes in the file. Appends and truncates are permitted, but you can only append at the end of the file, not at an arbitrary point.
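The write-once-read-many rules can be illustrated with a toy append-only file in Java. AppendOnlyFile is a hypothetical class, not an HDFS API: it accepts appends at the end and truncation to a shorter length, and rejects writes at an arbitrary offset.

```java
/** Toy append-only file mirroring HDFS's write-once-read-many rules (not an HDFS API). */
public class AppendOnlyFile {
    private final StringBuilder content = new StringBuilder();

    /** Appends are allowed: data can only be added at the end of the file. */
    public void append(String data) {
        content.append(data);
    }

    /** Truncation is allowed: the file can be cut back to a shorter length. */
    public void truncate(int newLength) {
        if (newLength < 0 || newLength > content.length()) {
            throw new IllegalArgumentException("newLength out of range");
        }
        content.setLength(newLength);
    }

    /** Random writes are rejected: existing data cannot be overwritten in place. */
    public void writeAt(int offset, String data) {
        throw new UnsupportedOperationException("random writes are not supported");
    }

    public String read() {
        return content.toString();
    }

    public static void main(String[] args) {
        AppendOnlyFile file = new AppendOnlyFile();
        file.append("line1\n");
        file.append("line2\n");
        file.truncate(6);
        System.out.print(file.read()); // prints line1
        try {
            file.writeAt(0, "X");
        } catch (UnsupportedOperationException e) {
            System.out.println("rejected: " + e.getMessage());
        }
    }
}
```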

HDFS replication

When you store data blocks across a cluster of commodity hardware, there is a high chance of a node going dysfunctional, a data block getting corrupted, or a node becoming unreachable because of a network problem. HDFS has to be fault tolerant and highly available despite these challenges. One of the ways these properties are ensured is through replication of data.

In HDFS, by default, each block is replicated three times, so each block is stored on three different DataNodes. To select these DataNodes, the Hadoop framework uses a replica placement policy.

With this replication mechanism in place, if a DataNode storing a particular block becomes dysfunctional, another DataNode holding a replica of that block can be used instead.
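The failover idea can be sketched in Java: given the DataNodes that hold replicas of a block, read from the first one that is still alive. chooseReplica is a hypothetical helper and the node names are made up; real HDFS reads involve more (for example, ordering replicas by network proximity and recovering from failures mid-read), which this sketch does not model.

```java
import java.util.List;
import java.util.Optional;
import java.util.Set;

public class ReplicaFailover {
    /** Hypothetical helper: returns the first replica location not known to be dead. */
    static Optional<String> chooseReplica(List<String> replicaNodes, Set<String> deadNodes) {
        for (String node : replicaNodes) {
            if (!deadNodes.contains(node)) {
                return Optional.of(node);
            }
        }
        return Optional.empty(); // every replica is unavailable, so the block cannot be read
    }

    public static void main(String[] args) {
        List<String> replicas = List.of("DataNode1", "DataNode3", "DataNode4");
        Set<String> dead = Set.of("DataNode1");
        System.out.println(chooseReplica(replicas, dead).orElse("no live replica")); // DataNode3
    }
}
```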

For example, suppose there are two files, A.txt and B.txt, stored in a cluster of 5 nodes. When these files are put in HDFS, let's say that, as per the applicable block size, each of them is divided into two blocks.

A.txt – block-1, block-2

B.txt – block-3, block-4

With the default replication factor of 3, block replication across 5 DataNodes can be depicted as follows–
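One possible assignment of the four blocks to the five DataNodes can be computed with a simple round-robin scheme. This is an illustrative assumption only, not Hadoop's replica placement policy (which is rack-aware); it merely guarantees that each block lands on three different DataNodes. The class and method names are hypothetical.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Illustrative round-robin replica assignment; NOT Hadoop's rack-aware placement policy. */
public class RoundRobinPlacement {
    /** Assigns each block to `replication` distinct nodes, starting one node further along per block. */
    static Map<String, List<String>> place(List<String> blocks, List<String> nodes, int replication) {
        if (replication > nodes.size()) {
            throw new IllegalArgumentException("replication factor exceeds number of DataNodes");
        }
        Map<String, List<String>> placement = new LinkedHashMap<>();
        for (int i = 0; i < blocks.size(); i++) {
            List<String> replicas = new ArrayList<>();
            for (int r = 0; r < replication; r++) {
                replicas.add(nodes.get((i + r) % nodes.size())); // consecutive nodes, wrapping around
            }
            placement.put(blocks.get(i), replicas);
        }
        return placement;
    }

    public static void main(String[] args) {
        List<String> blocks = List.of("block-1", "block-2", "block-3", "block-4");
        List<String> nodes = List.of("DataNode1", "DataNode2", "DataNode3", "DataNode4", "DataNode5");
        place(blocks, nodes, 3).forEach((block, replicas) -> System.out.println(block + " -> " + replicas));
    }
}
```

This prints block-1 on DataNode1-3, block-2 on DataNode2-4, block-3 on DataNode3-5 and block-4 on DataNode4, DataNode5 and DataNode1. Because every block sits on three distinct nodes, the failure of any two DataNodes still leaves at least one copy of each block.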

Changing the default replication factor in Hadoop

If you want to change the default replication factor of 3 in Hadoop, you will have to edit the etc/hadoop/hdfs-site.xml file in your Hadoop installation directory.

The property you need to change is dfs.replication.

```xml
<property>
  <name>dfs.replication</name>
  <value>3</value>
</property>
```
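Raising or lowering dfs.replication trades fault tolerance against disk usage, since every block is stored that many times. Here is a minimal Java sketch of that raw-storage arithmetic; rawBytes is a hypothetical helper, and the calculation ignores checksum files and other metadata overhead.

```java
public class RawStorage {
    static final long MB = 1024L * 1024L;

    /** Hypothetical helper: raw disk space used once every block is stored `replication` times. */
    static long rawBytes(long fileSizeBytes, int replication) {
        return fileSizeBytes * replication;
    }

    public static void main(String[] args) {
        long file = 200 * MB;
        for (int replication = 1; replication <= 3; replication++) {
            System.out.println("replication " + replication + ": " + rawBytes(file, replication) / MB + " MB");
        }
    }
}
```

For the 200 MB file used earlier, this gives 200 MB, 400 MB and 600 MB of raw storage for replication factors 1, 2 and 3.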

That's all for this topic What is Hadoop Distributed File System (HDFS). If you have any doubts or suggestions, please drop a comment. Thanks!

Related Topics

- Introduction to Hadoop Framework
- Replica Placement Policy in Hadoop Framework
- NameNode, DataNode And Secondary NameNode in HDFS
- HDFS Commands Reference List
- HDFS High Availability

You may also like-

- What is Big Data

-

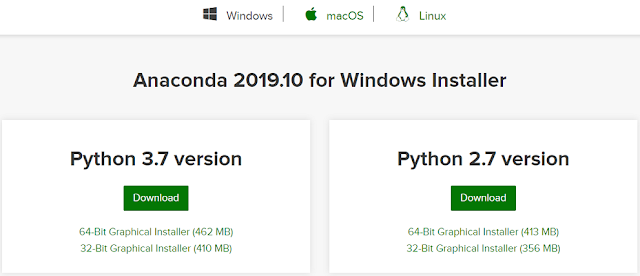

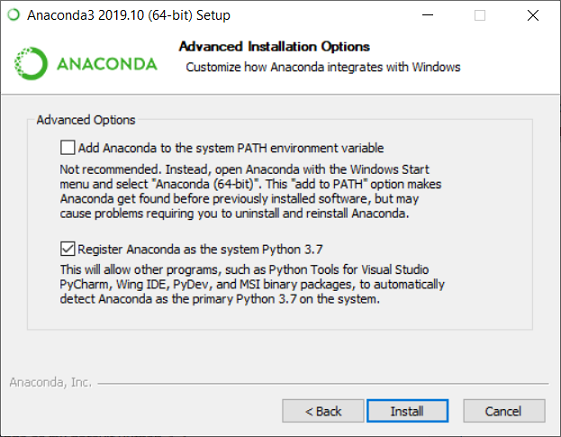

Installing Hadoop on a Single Node Cluster in Pseudo-Distributed Mode

- Installing Ubuntu Along With Windows
- Word Count MapReduce Program in Hadoop
- File Write in HDFS - Hadoop Framework Internal Steps
- Java Program to Read File in HDFS
- Java Collections Interview Questions
- Garbage Collection in Java